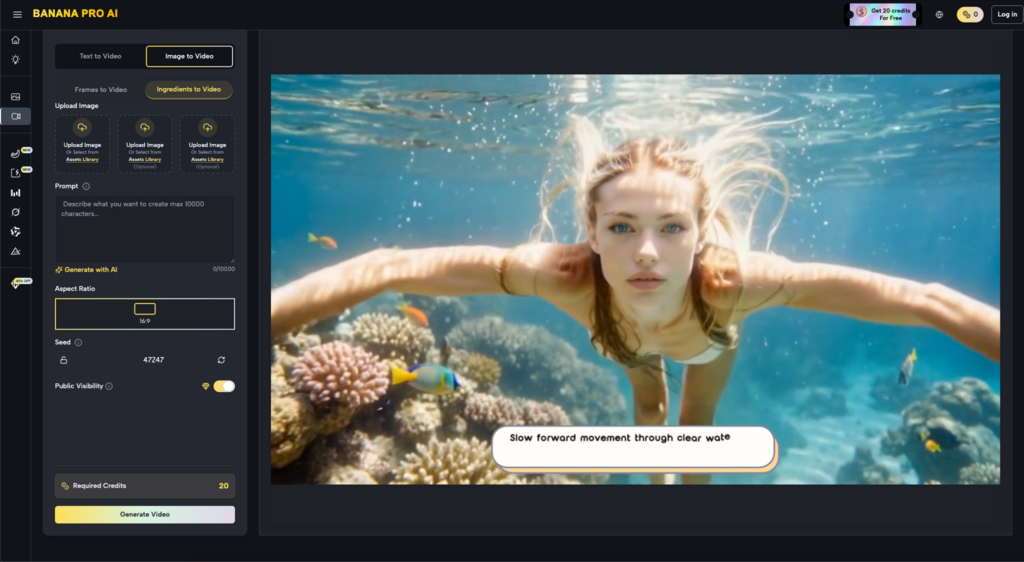

How Banana Pro AI Fits Into Latency and Cost Control

The transition from experimental AI generation to a standardized production pipeline is where most creative teams encounter their first real friction. It is one thing to generate a single, high-quality image for a presentation; it is an entirely different challenge to generate four hundred variations for a dynamic ad campaign or an entire library of social media assets. In this high-volume environment, the abstract promise of “AI efficiency” meets the hard reality of compute costs and inference latency.

When teams evaluate a tool like Nano Banana Pro, the conversation usually shifts away from pure aesthetic capability and toward operational sustainability. The question is no longer just who can make the prettiest picture, but who can do it at a speed and cost that don’t cannibalize the project’s margin. Answering it requires a nuanced understanding of how the different models within an ecosystem (specialized speed-focused models on one side, heavier and more robust engines on the other) interact to form a cohesive workflow.

The Latency Tax in Creative Operations

Latency is often the silent killer of creative momentum. In a professional setting, a 30-second wait for a single generation might seem negligible, but when multiplied across a team of ten designers performing iterative tasks, those seconds aggregate into hours of idle time per week. This “latency tax” discourages experimentation. If a creator knows a generation will take a significant amount of time, they are less likely to iterate on small details, leading to a “good enough” mentality that eventually erodes the quality of the output.
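To make the “latency tax” concrete, here is a rough back-of-the-envelope calculation. The workload figures are assumptions, not measurements: 60 generations per designer per day, a five-day week, and every second of waiting counted as idle time. The 5-second comparison case is likewise hypothetical.

```python
# Back-of-the-envelope "latency tax" calculator.
# All inputs are hypothetical planning figures, not measured benchmarks.


def weekly_wait_hours(
    designers: int,
    generations_per_day: int,
    seconds_per_generation: float,
    workdays_per_week: int = 5,
) -> float:
    """Team-wide time spent waiting on generations per week, in hours."""
    total_seconds = (
        designers * generations_per_day * workdays_per_week * seconds_per_generation
    )
    return total_seconds / 3600


# Ten designers, 60 generations each per day (assumed), 30 s per generation.
slow_hours = weekly_wait_hours(designers=10, generations_per_day=60, seconds_per_generation=30)
# The same workload at a hypothetical 5 s per generation.
fast_hours = weekly_wait_hours(designers=10, generations_per_day=60, seconds_per_generation=5)
print(f"{slow_hours:.1f} h vs {fast_hours:.1f} h per week")
```

Under these assumptions the 30-second case adds up to 25 hours of team-wide waiting per week, against roughly 4 hours at 5 seconds.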

Nano Banana Pro is positioned as a direct response to this bottleneck. By prioritizing lower inference times, it allows for a more fluid, conversational style of creation. This is particularly relevant when using an AI Image Editor for tasks like in-painting or rapid prototyping. The ability to see a result in a matter of seconds rather than minutes changes the psychology of the user from a passive observer to an active director. However, this speed is rarely free of trade-offs.

Architectural Trade-offs: Speed vs. Complexity

The engineering behind Nano Banana is not fully public, but speed-focused image models generally rely on heavy optimization of the generation process. In diffusion-style systems, standard high-fidelity models often require a large number of sampling steps to resolve fine details, such as realistic skin textures or complex architectural geometry. To reach speeds like those seen in Nano Banana, these steps are often compressed or distilled.

From a practical standpoint, this means that while the model is exceptionally fast, it may lack the “depth” found in heavier models like Seedream 5.0 or specialized high-resolution engines. In our testing, for instance, the Nano Banana model excels at maintaining composition and color theory at high speeds, but it can struggle with extremely high-frequency details, such as distant text in a background or the intricate interlocking of jewelry. Some uncertainty is unavoidable here: we cannot yet guarantee that a speed-optimized model will replicate the fine-detail nuances of a model three times its size. Teams must decide where the “quality floor” lies for their specific use case.
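The speed gain from compressing or distilling sampling steps can be reasoned about with a deliberately simple latency model. This sketch assumes a diffusion-style model whose latency is a fixed overhead plus a constant cost per step; the step counts and timings are hypothetical placeholders, not figures for Nano Banana or any other named model.

```python
# Toy model: latency = fixed overhead + (steps x per-step cost).
# Step counts and timings are hypothetical placeholders.


def estimated_latency(steps: int, seconds_per_step: float, overhead_s: float) -> float:
    """Fixed overhead (queueing, encoding, decoding) plus per-step denoising cost."""
    return overhead_s + steps * seconds_per_step


full = estimated_latency(steps=50, seconds_per_step=0.5, overhead_s=2.0)
distilled = estimated_latency(steps=4, seconds_per_step=0.5, overhead_s=2.0)
print(f"full: {full:.1f} s, distilled: {distilled:.1f} s, speedup: {full / distilled:.2f}x")
```

One design point the model makes visible: fixed overhead caps the speedup. Cutting steps from 50 to 4 is a 12.5x reduction, but end-to-end latency in this example falls only by 6.75x.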

Cost-Per-Asset: The Financial Overhead of Scale

For performance marketers and agency leads, the cost of AI generation is a line item that demands scrutiny. While many platforms offer “unlimited” plans, these often come with hidden “fast hours” or throttling that kicks in just as a team hits its stride. When deploying Banana Pro across an organization, the focus shifts to the cost-per-successful-asset.

A successful asset isn’t just the final image; it’s the sum of all the discarded iterations that led to it. Suppose a high-end model costs ten times more per generation than Nano Banana Pro but produces a usable result only 20% more often. Because the cost per usable asset is the cost per generation divided by the success rate, each usable result from the high-end model still costs about 10 / 1.2 ≈ 8.3 times as much, so the math quickly favors the faster, cheaper model for the initial 80% of the creative process. This tiered approach allows teams to “burn” compute on exploration and rough drafts using optimized tools before committing expensive credits to the final, high-resolution polish.
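The cost-per-successful-asset comparison can be checked with a few lines of Python. This sketch assumes each generation succeeds independently with a fixed probability, so the expected number of attempts per usable asset is 1 / success rate. Prices are in normalized credits, and the 25% baseline success rate is an assumed figure.

```python
# Expected spend per usable asset under a simple independent-attempts model.


def cost_per_usable_asset(cost_per_generation: float, success_rate: float) -> float:
    """Each attempt succeeds with probability success_rate, so on average
    1 / success_rate attempts are needed per usable asset."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_generation / success_rate


# Fast model: 1 credit per generation, assumed 25% usable rate.
fast_cost = cost_per_usable_asset(cost_per_generation=1.0, success_rate=0.25)
# Heavy model: 10x the price, 20% (relative) more usable results.
heavy_cost = cost_per_usable_asset(cost_per_generation=10.0, success_rate=0.25 * 1.2)
print(f"fast: {fast_cost:.2f}, heavy: {heavy_cost:.2f}, ratio: {heavy_cost / fast_cost:.2f}")
```

Because the baseline success rate cancels out of the ratio, the heavy model comes out about 8.3 times more expensive per usable asset for any baseline you plug in, as long as its success rate stays 1.2 times the fast model’s.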

The Canvas Workflow: A Tactical Advantage

One of the most effective ways to manage both time and cost is to move away from the “prompt-and-pray” method and toward a spatial workflow. The presence of a dedicated Banana AI canvas environment changes how assets are constructed. Instead of trying to generate a perfect scene in a single pass, creators can use the speed of the Nano Banana Pro model to generate individual components—backgrounds, subjects, and lighting effects—separately.

This modular approach is significantly more cost-effective. If a creator needs to change just the lighting on a character’s face, using a high-speed AI Image Editor to in-paint that specific area is faster and cheaper than re-generating the entire 4K image. It also reduces the creative risk; you aren’t gambling the entire composition on every new prompt.

Integrating Batch Processing into the Pipeline

Batching is where the scalability of Nano Banana truly shines. For e-commerce applications, such as generating lifestyle backgrounds for thousands of product SKUs, the sheer volume makes high-latency models impractical. A team can set up a workflow where the initial “heavy lifting” of background removal and composition is handled by automated scripts, and the creative “flavoring” is added via Nano Banana.
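A batch run like this is mostly a concurrency problem: many independent requests, with a cap on how many are in flight so the pipeline doesn’t trip rate limits. The sketch below uses Python’s asyncio with a semaphore. The generate callable is a hypothetical stand-in, since Banana Pro’s actual API is not described here; background removal is assumed to have happened upstream, and the concurrency cap of 8 is arbitrary.

```python
import asyncio
from typing import Awaitable, Callable


async def run_batch(
    skus: list[str],
    generate: Callable[[str], Awaitable[str]],
    max_concurrency: int = 8,
) -> dict[str, str]:
    """Run generate() over every SKU with at most max_concurrency calls in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def worker(sku: str) -> tuple[str, str]:
        async with semaphore:  # wait for a free slot before calling the model
            return sku, await generate(sku)

    pairs = await asyncio.gather(*(worker(s) for s in skus))
    return dict(pairs)


# Hypothetical stand-in for a real image-generation call.
async def fake_generate(sku: str) -> str:
    await asyncio.sleep(0.01)  # simulate network + inference latency
    return f"{sku}_lifestyle.png"


results = asyncio.run(run_batch([f"SKU-{i:04d}" for i in range(20)], fake_generate))
print(len(results), results["SKU-0000"])
```

asyncio.gather returns results in input order, so pairing each SKU with its output keeps the mapping unambiguous even though requests complete out of order.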

This brings us to a second point of limitation: reproducibility. In high-speed, low-cost environments, there is often a slight increase in variance between generations compared to slower, more “stable” models. When running a batch of 500 images, a creator might find that 10% require manual intervention due to artifacts that a more computationally expensive model might have smoothed over. Understanding this “fail rate” is crucial for accurate resource planning.
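Turning a fail rate into a staffing estimate takes one extra step, because the mean alone underestimates bad batches. This sketch treats each image as independently needing manual intervention with probability fail_rate (a binomial model), plans for roughly the mean plus two standard deviations as a rule of thumb, and assumes a hypothetical 6 minutes of cleanup per flagged image.

```python
import math


def plan_batch(batch_size: int, fail_rate: float, minutes_per_fix: float) -> dict[str, float]:
    """Rough review plan for a batch where each image independently needs
    manual intervention with probability fail_rate."""
    expected_fixes = batch_size * fail_rate
    # Standard deviation of a binomial count, used to size a buffer above the mean.
    std_fixes = math.sqrt(batch_size * fail_rate * (1 - fail_rate))
    planned_fixes = math.ceil(expected_fixes + 2 * std_fixes)  # about mean + 2 sigma
    return {
        "expected_fixes": expected_fixes,
        "planned_fixes": planned_fixes,
        "review_hours": planned_fixes * minutes_per_fix / 60,
    }


plan = plan_batch(batch_size=500, fail_rate=0.10, minutes_per_fix=6)
print(plan)
```

For 500 images at a 10% fail rate, this plans for 64 fixes (against an expected 50), or about 6.4 hours of review.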

Identifying the Inflection Point for Quality

The real skill in managing an AI-driven creative team lies in knowing when to switch gears. Nano Banana is the workhorse for the ideation and mid-production phases, but there is an inflection point where the limitations of a fast model become a liability.

For high-profile hero assets (billboards, website headers, or premium print media), the strategy should involve upscaling or “refining” the Nano Banana output through a more robust engine. This “hybrid workflow” leverages the cost savings of the fast model for the 90% of the work that is structural, while reserving the expensive compute for the final 10% that requires aesthetic perfection. It also helps keep the “AI look” (the overly smooth, plastic texture often associated with aggressively shortened generation) out of the final product.
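The hybrid workflow amounts to a routing rule: every asset starts on the fast model, and only hero assets get a second, more expensive pass. A minimal sketch follows; the stage names and model identifiers are hypothetical placeholders, not Banana Pro product names.

```python
from enum import Enum


class Stage(Enum):
    EXPLORATION = "exploration"
    MID_PRODUCTION = "mid_production"
    HERO = "hero"


# Hypothetical tier names; substitute whatever models your platform exposes.
FAST_MODEL = "fast-draft-model"
REFINE_MODEL = "high-fidelity-refiner"


def pipeline_for(stage: Stage) -> list[str]:
    """Return the ordered list of model passes an asset should go through."""
    if stage is Stage.HERO:
        # Structure from the fast model, then a refinement/upscale pass.
        return [FAST_MODEL, REFINE_MODEL]
    return [FAST_MODEL]


for stage in Stage:
    print(stage.value, "->", " then ".join(pipeline_for(stage)))
```

Keeping the rule in one function means the “inflection point” is an explicit, reviewable decision rather than something each designer judges ad hoc.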

The Role of the Prompt in Cost Control

Prompt engineering is often treated as a creative exercise, but it is also a financial one. A poorly worded prompt leads to wasted generations, which translates directly into wasted budget and time. By using structured tools like the Banana Prompt feature, teams can create templates that maximize the effectiveness of the Nano Banana Pro model.

Standardizing prompts across a team ensures that everyone is working from the same baseline of quality. It reduces the “lottery effect” of generative AI. If a team knows that a specific set of descriptors works reliably with the Nano Banana architecture, they can replicate that success across multiple projects without the trial-and-error that typically drains a credit balance.
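A shared prompt template is one simple way to implement that standardization. The sketch below uses Python’s string.Template; the slot names, brand defaults, and wording are hypothetical examples, not the schema of the Banana Prompt feature.

```python
from string import Template

# Hypothetical house template; slot names are illustrative only.
PRODUCT_LIFESTYLE = Template(
    "$subject on $surface, $lighting lighting, $palette color palette, "
    "shot on $lens, $mood mood, no text, no watermark"
)

# Hypothetical brand-wide defaults shared by the whole team.
BRAND_DEFAULTS = {
    "lighting": "soft natural window",
    "palette": "warm neutral",
    "lens": "a 50mm lens",
    "mood": "calm",
}


def build_prompt(template: Template, **overrides: str) -> str:
    """Fill the template with brand defaults; per-asset values override them.
    Template.substitute raises KeyError if any slot is left unfilled."""
    return template.substitute({**BRAND_DEFAULTS, **overrides})


prompt = build_prompt(PRODUCT_LIFESTYLE, subject="a ceramic mug", surface="an oak table")
print(prompt)
```

Because substitute() fails loudly on a missing slot, an incomplete prompt is caught before it spends a generation.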

Practical Judgment: When Speed is the Only Metric

There are certain scenarios where quality, while important, is secondary to immediacy. In real-time marketing—responding to a trending topic or a live event—the ability to generate a visual asset in five seconds vs. fifty seconds is the difference between being part of the conversation and being yesterday’s news.

In these instances, the “good enough” output of a fast model is actually superior to the “perfect” output of a slow one because the window of relevance is so narrow. We see this frequently in social media “war rooms” where the volume of content outweighs the need for gallery-grade resolution. The Nano Banana engine is well suited to this type of high-velocity deployment, where the lifecycle of the asset is measured in hours rather than months.

Managing Technical Uncertainty in the Cloud

It is also vital to maintain a level of skepticism regarding “guaranteed” performance. All cloud-based AI tools are subject to the fluctuating availability of GPUs. Even with a dedicated plan, peak global usage times can impact latency. A production-savvy lead will always account for “buffer time” in their project schedules, even when using high-speed models.
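One way to size that buffer is to schedule against tail latency instead of the average. The sketch below compares a plan built from mean latency with one built from the 95th percentile (nearest-rank method). The latency samples are hypothetical, and the calculation assumes jobs run one after another. Using the tail value for every job is deliberately pessimistic: it gives a ceiling, not a forecast.

```python
import math
import statistics


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile of observed latencies; pct must be in (0, 100]."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def schedule_minutes(samples: list[float], jobs: int, pct: float = 95) -> dict[str, float]:
    """Total run time for `jobs` sequential generations, planned two ways."""
    return {
        "mean_based": statistics.mean(samples) * jobs / 60,
        "tail_based": percentile(samples, pct) * jobs / 60,
    }


# Hypothetical latencies in seconds, including two peak-hour spikes.
observed = [4.8, 5.1, 5.0, 4.9, 5.3, 5.2, 12.5, 5.0, 18.0, 5.1]
plan = schedule_minutes(observed, jobs=300)
print(plan)
```

With these sample numbers the mean-based plan comes to about 35 minutes for 300 jobs, while the tail-based plan allows 90. With only ten samples the nearest-rank p95 is simply the slowest observation; real planning would use far more data.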

Furthermore, the “black box” nature of proprietary models means that updates can occasionally shift the aesthetic output of a model overnight. What worked for a brand’s specific “look” on a Tuesday might produce slightly different color weights on a Wednesday. This is why a tool-savvy team maintains a flexible library of styles rather than relying on a single, rigid prompt structure.
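A versioned style library is one way to make such drift visible. In this sketch each brand style is recorded against the model version it was validated on, and looking up a style on an unvalidated version raises an error instead of silently reusing the old prompt. The version strings are hypothetical, and whether a given platform exposes model versions at all is an assumption to check.

```python
from dataclasses import dataclass, field


@dataclass
class StyleLibrary:
    """Brand styles keyed by (style name, model version), so a model update
    shows up as a missing entry instead of a quietly different look."""

    entries: dict[tuple[str, str], str] = field(default_factory=dict)

    def register(self, style: str, model_version: str, prompt_fragment: str) -> None:
        """Record a prompt fragment that was validated on a specific model version."""
        self.entries[(style, model_version)] = prompt_fragment

    def lookup(self, style: str, model_version: str) -> str:
        """Return the validated fragment, or fail loudly if this version is untested."""
        try:
            return self.entries[(style, model_version)]
        except KeyError:
            raise LookupError(
                f"style {style!r} has not been validated on model {model_version!r}; "
                "re-test before production use"
            ) from None


library = StyleLibrary()
# "model-2025-06" is a hypothetical version label.
library.register("summer-campaign", "model-2025-06", "sun-bleached pastels, high-key light")
print(library.lookup("summer-campaign", "model-2025-06"))
```

Several fragments can live under the same style name for different versions, which keeps the library flexible without hiding which prompt was tested where.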

Conclusion: Building a Sustainable Ecosystem

Ultimately, the deployment of AI visual workflows at scale is not about finding the “best” model, but about building the most resilient ecosystem. Nano Banana Pro serves as a critical layer in that ecosystem, providing the speed and cost-efficiency required to make AI more than just a novelty.

By balancing the rapid-fire capabilities of the Nano Banana architecture with the high-fidelity refinement of the broader platform, teams can finally move past the bottleneck of manual asset creation. The goal is a state where the technology fades into the background and creators are free to focus on strategy and storytelling, confident that the infrastructure behind them, whether the canvas-based AI Image Editor or the high-speed generation engines, is optimized for both their creative vision and their bottom line.

The shift toward this industrial-scale mindset is what separates the teams who are merely “playing” with AI from those who are truly integrating it into their commercial success. It requires a cold, practical look at latency, a disciplined approach to cost, and a willingness to navigate the inherent uncertainties of a rapidly evolving field.